Real-time light source estimation in realistic scenes using the Kinect sensor

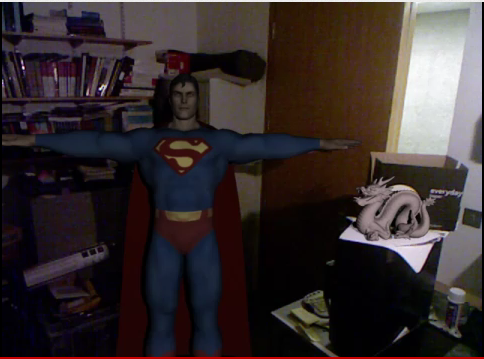

We present a method for estimating the position of a point light source in a scene recorded by the Kinect sensor. The image and depth information from the Kinect is sufficient to estimate a light position in the scene, which allows synthetic objects to be rendered into the scene realistically enough for augmented reality purposes. Our method does not require a probe object or other device. To make the method suitable for augmented reality, we developed a GPU implementation that currently runs at 1 fps. In most scenarios this is fast enough, because the positions of both the light source and the Kinect are usually fixed. We recorded a dataset to evaluate the method, on which it estimates the angle of the light source with an average error of 10 degrees. Results with synthetic objects rendered into the recorded scenes show that this accuracy is good enough for the rendered objects to look realistic.
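To make the reported 10 degree figure concrete, the following is a minimal sketch of how an angular error between an estimated and a ground-truth light position could be computed. It assumes the error is the angle between the two directions pointing from a common reference point in the scene towards each light position; the exact definition used in the evaluation is not given here, and the function names `light_direction` and `angular_error_deg` are hypothetical.

```python
import math


def light_direction(light_position, reference_point):
    """Vector from a scene reference point towards a light position.

    Assumption: both arguments are (x, y, z) tuples in the same
    coordinate frame, e.g. the Kinect camera frame.
    """
    return tuple(l - p for l, p in zip(light_position, reference_point))


def angular_error_deg(estimated, ground_truth):
    """Angle in degrees between two non-zero 3D direction vectors."""
    dot = sum(e * g for e, g in zip(estimated, ground_truth))
    norm_e = math.sqrt(sum(e * e for e in estimated))
    norm_g = math.sqrt(sum(g * g for g in ground_truth))
    if norm_e == 0.0 or norm_g == 0.0:
        raise ValueError("direction vectors must be non-zero")
    # Clamp to [-1, 1] so rounding errors cannot push acos out of its domain.
    cos_angle = max(-1.0, min(1.0, dot / (norm_e * norm_g)))
    return math.degrees(math.acos(cos_angle))


if __name__ == "__main__":
    # Illustrative values only, not taken from the recorded dataset.
    reference = (0.0, 0.0, 2.0)   # a point in the scene, in metres
    true_light = (1.0, 1.5, 0.5)
    estimated_light = (0.8, 1.7, 0.6)
    error = angular_error_deg(
        light_direction(estimated_light, reference),
        light_direction(true_light, reference),
    )
    print(f"angular error: {error:.1f} degrees")
```

Measuring the error as an angle rather than a distance reflects that, for shading purposes, the direction of the incoming light usually matters more than how far away the source is.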

How to cite:

Related publications:

Interactive light source position estimation for augmented reality with an RGB-D camera. Boom, B.J., Orts-Escolano, S., Ning, X.X., McDonagh, S., Sandilands, P. & Fisher, R.B. (2015) Computer Animation and Virtual Worlds, DOI: 10.1002/cav.1686


Point Light Source Estimation based on Scenes Recorded by a RGB-D camera. Boom, B., Orts-Escolano, S., Ning, X., McDonagh, S., Sandilands, P. & Fisher, R.B. (2013), BMVA Press
